Preserve Requirements across Abstraction Levels

Meta-Information

Origin: Udo Brockmeyer, Darren Sexton / RICARDO, Brian Nielsen / Aalborg University

Written: October 2014

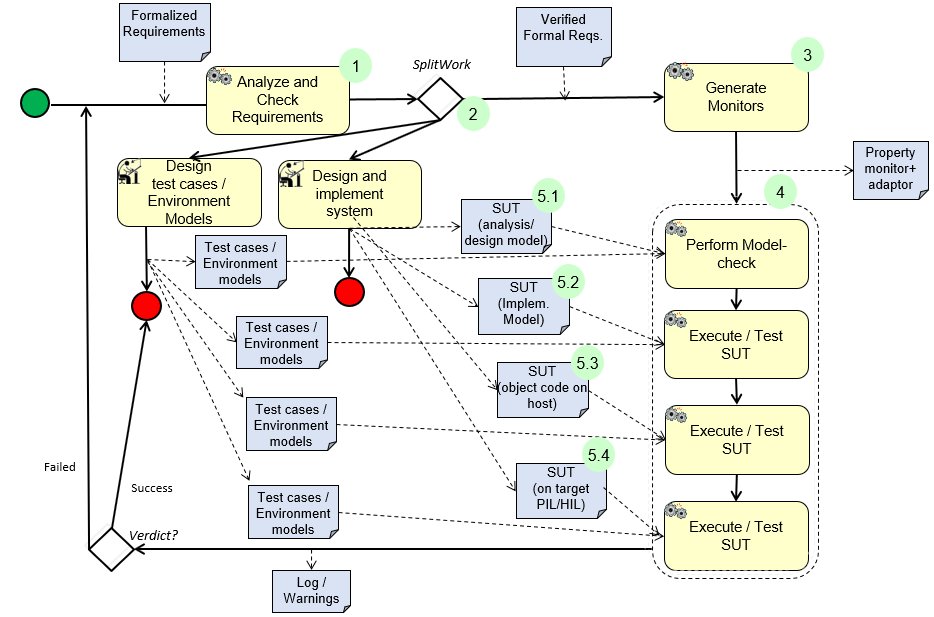

Purpose: Preservation of requirements and properties through (static model-check and dynamic runtime) verification across system- and abstraction-levels. This is most frequently achieved by generation of monitoring automata (e.g. Büchi-automata) from the (semi-) formal requirements or logical properties. The monitors receive events and state information from the adaptor and checkes whether the automata may enter a reject state, in which case the property is violated.

Context/Pre-Conditions: The pattern is in particular usefull when the required adaptors for the monitors can be auto generated to suit the different levels of abstraction. The technique may not be deductively sound, as monitors are only checked against the behavior they actually observe. For the runtime verification this depends on the limited behavior revealed by test cases (or environment simulations). For more abstract models, exhaustive static model-check may be possible wrt. to a given environment model.

To consider: It is required that the user is able to formalize the requirements sufficiently precisely to allow a monitor to be synthesized. After this the required use expertise is low for runtime verification given that the monitors that generated automatically. Debugging the cause of a failing monitor may be time-consuming, in particular if it happens after a long execution run.

Structure

Participants and Important Artefacts

V&V Engineer: Responsible for ensuring that overall requirements are accurately formalized and satisfied at all system level.

Developers: Responsible for constructing the detailed designs and implementation.

Test Engineer: Responsible for developing test cases, environment models, environment simulations at the involved levels.

Monitor: The monitor is a „runtime“ component (e.g. Büchi-automata) that receives events and state information from the adaptor and checkes whether the automata may enter a reject state, in which case the property is violated. The monitor is normally generated automatically from the (semi-) formal requirements or logical properties.

Adaptor: Responsible for detecting the relevant system state changes and events, and abstracting these into a form, needed by the monitor.

.

Actions / Collaborations

(1) It is assumed that the V&V Engineer, Test Engineer, and Developer jointly have formalized requirements, and that these are verified prior further activities (otherwise there will be more debugging work to find out whether the defect is in the system or monitors/requirements).

(2) The pattern is agnostic about the exact order in which the different system levels are developed – see the „split work“ fork.

(3) The „monitor“ and its „adaptor“ are generated for a particular system level.

(4) When the tests (or environment model), system level, and monitor is prepared, the system is executed and the monitor checks the received events against the underlying accepting/rejecting conditions, and issues a warning if the property is violated.

(5) In the pattern 4 abstraction levels are identified. Not all need to be present:

(5.1) Analysis or Design Model: A relatively abstract model capturing the essential behavior of the system and its requirements.

(5.2) Implementation model: A model so concrete that code may be generated from it (potentially) automatically (MiL).

(5.3) Object code executing on the development host (SiL test).

(5.4) Code executing on target (PiL/HiL) . Presumes that the resource overhead of the monitor is sufficiently low (not exceeding memory or CPU constraints of the target).

.

Discussion

Benefits: It is automatically checked that formalized requirements (and properties) are satisfied at all system abstractions.

.

Application Examples

MBAT Automotive Use Case 2 (Common Powertrain Controller (CPC)):

Participants / Artefacts:

BTC Embedded Specifier

Common functional requirements

Analyse and check requirements

Goblint, Astrée

Proof obligations (generate monitors)

C-Observer

for target systems

MBAT Automotive Use Case 5 (Transmission Controller Product Line) / SC03 (Automatic TCG):

Participants / Artefacts:

Ricardo

Applying method in use-case context

Developing detailed internal guidance

BTC-ES

Developed method

Developed tool-chain:

– BTC-EmbeddedSpecifier to formalise requirements

– BTC-EmbeddedValidator to prove (by model-checking) monitors on models

– BTC-EmbeddedTester to test with montiors running in different test environment

MBAT Aerospace Use Case 5 (EADS „DeViLA“) / SC04 (Virtual Testing (MiL), Model Simulation), SC09 (Usage of Formal Analysis Model):

Participants / Artefacts:

V&V Engineer

Develops test model from system requirements (IBM Rational DOORS, MaTeLo PLM)

Identfies subset of input NLRs and formalizes them (IBM Rational DOORS, BTC Embedded Specifier) and define contracts, apply these contracts to the system model and generates C-Observers

Developers

Develops system model (IBM Rational Rhapsody)

Test Engineer

Develops test cases and defines test suites (MaTeLo Testor, MicroNova EXAM

Monitor, Adaptor

Observers are generated serving as monitors while running the test cases against the SUT

.

Relations to other Patterns

| Pattern Name | Relation* |

| Requirements Formalization | That pattern eases work of this pattern |

| Closed-Loop Testing | Like “Closed-Loop Testing”, this pattern also deals with MiL/SiL/HiL |

| all other AT-patterns | should consider this pattern |

* “this pattern” denotes the pattern described here, “that pattern” denotes the related pattern

Like Closed-Loop Testing, this pattern also deals with MiL/SiL/HiL