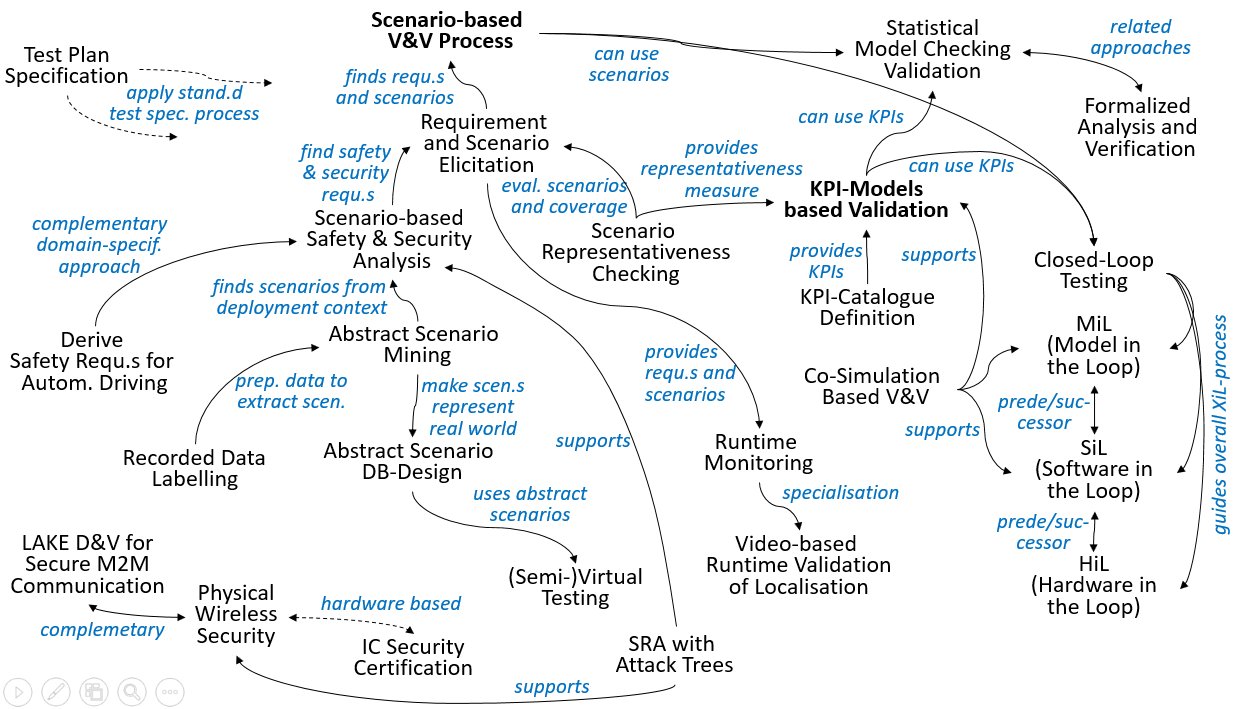

The V&V-Patterns

The following diagram indicates the most relevant relations between the patterns described here. Bold pattern names indicate new main patterns for testing and validating ACPS. The table below the diagram gives short pattern descriptions.

| Pattern Name | Purpose |
| --- | --- |
| Test Plan Specification | Specification of a test plan using a generic template based on ISO/IEC/IEEE 29119-3 |
| Scenario-based V&V Process | Whole process from operational scenario mining through requirement elicitation and abstract scenario design to verification |
| Requirement and Scenario Elicitation | Combined elicitation of requirements and the scenarios in which they have to be satisfied |
| Abstract Scenario Mining | Mining of abstract scenarios covering the deployment context of a SUT (System under Test) |
| Abstract Scenario DB Design | Creation of a set of abstract scenarios and models which should represent the real world |
| Scenario Representativeness Checking | Quantification of how well a set of abstract scenarios represents the real world |
| Scenario-based Safety & Security Analysis | Refinement of functional requirements into safety and security requirements and corresponding abstract scenarios |
| Derive Safety Requirements for Autonomous Driving | Scenario- and fault-tree-based pattern for functional safety analysis according to ISO 26262 |
| Formalized Analysis and Verification | Verify that the design of a system or procedure with dependability requirements (e.g. security) is indeed dependable |
| KPI-Model based Validation | Apply Design-of-Experiments based behavior models in order to reduce the number of test runs needed to validate a SUT |
| KPI-Catalogue Definition | Identification of (application-specific) Key Performance Indicators (KPIs) |
| Closed-Loop Testing | Validate the SUT in several closed-loop testing steps |
| MiL (Model in the Loop) | Testing a model of the SUT in a simulated environment for early assessment |
| SiL (Software in the Loop) | Testing the SUT software in a simulated environment for assessing its correct operation in a closed-loop setting |
| HiL (Hardware in the Loop) | Testing the target SUT (hardware and software) in a real-time hardware simulation environment for assessing its correct operation in a closed-loop setting |
| Co-Simulation Based V&V | Construction and execution of a co-simulation scenario for verification of a SUT |
| Semi-virtual Testing | Estimation of the risk that a given SUT violates a given catalog of requirements |
| Statistical Model-Checking based Validation | Application of statistical model checking to decision-making and perception systems |
| Recorded Data Labeling | Generation of ground-truth data related to the relevant objects of a use case |
| Video-based Runtime Validation of Localisation | Validation at runtime of the localization component using a camera-based visual method on a test track |
| Runtime Monitoring | Monitor, at runtime in the test environment, whether the SUT satisfies its specification |
| Physical Wireless Security | Verification of physical wireless secure communication between different nodes, interpreting secure communication as jamming-resistant communication |
| IC Security Certification | Process for the design and manufacture of an integrated circuit (chip) whose security features and functionality can be evaluated and certified or qualified by independent international standardization authorities |
| LAKE D&V for secure M2M Communication | Design and verification of a location-based authentication and key establishment (LAKE) process for secure M2M communication |
| Security Risk Assessment with Attack Trees | Assess potential security risks for a (to-be-developed) CPS using attack trees |
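
To make the last table entry more concrete, the following is a minimal sketch of how an attack tree can be evaluated. It is not taken from the pattern description itself. The `Node` class, the `success_probability` function, the example attack steps and all probability values are hypothetical and chosen only for illustration. The sketch also assumes that attack steps succeed independently of each other, which a real assessment would have to justify.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    """One node of an illustrative attack tree (hypothetical structure)."""
    name: str
    gate: str = "LEAF"        # "LEAF", "AND" (all children needed) or "OR" (any child suffices)
    probability: float = 0.0  # success probability; only used for leaves
    children: List["Node"] = field(default_factory=list)


def success_probability(node: Node) -> float:
    """Propagate leaf probabilities to the root, assuming independent attack steps."""
    if node.gate == "LEAF":
        return node.probability
    child_probs = [success_probability(child) for child in node.children]
    if node.gate == "AND":
        # The attack succeeds only if every sub-step succeeds.
        result = 1.0
        for p in child_probs:
            result *= p
        return result
    if node.gate == "OR":
        # The attack fails only if every alternative fails.
        all_fail = 1.0
        for p in child_probs:
            all_fail *= 1.0 - p
        return 1.0 - all_fail
    raise ValueError(f"unknown gate type: {node.gate}")


# Made-up example: disrupt a wireless link either by jamming it,
# or by stealing a key AND spoofing a location.
root = Node("disrupt communication", gate="OR", children=[
    Node("jam channel", probability=0.2),
    Node("impersonate node", gate="AND", children=[
        Node("steal key", probability=0.5),
        Node("spoof location", probability=0.4),
    ]),
])

if __name__ == "__main__":
    print(f"root success probability: {success_probability(root):.2f}")
```

The same recursive traversal could propagate other attributes, such as minimum attack cost (sum over AND children, minimum over OR children), depending on which risk measure the assessment uses.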