MiL (Model in the Loop)

Meta-Information

Origin: Wolfgang Herzner / AIT, David Gonzalez-Arjona / GMV

Written: April 2019

Purpose: Testing a model of the SUT in a simulated environment for an early assessment of whether the intended solution is appropriate for the given requirements and does not exhibit relevant flaws.

Context/Pre-Conditions:

– Both the SUT model and the environment model must capture all relevant details.

– The interface between the SUT model and the environment model must be defined.

To consider: Modelling the environment may be expensive and require sound expert knowledge. However, the environment model can possibly be reused for SiL or for other SUTs. (The model of the SUT is also needed, but it can often be used as a basis for deriving the target system.)

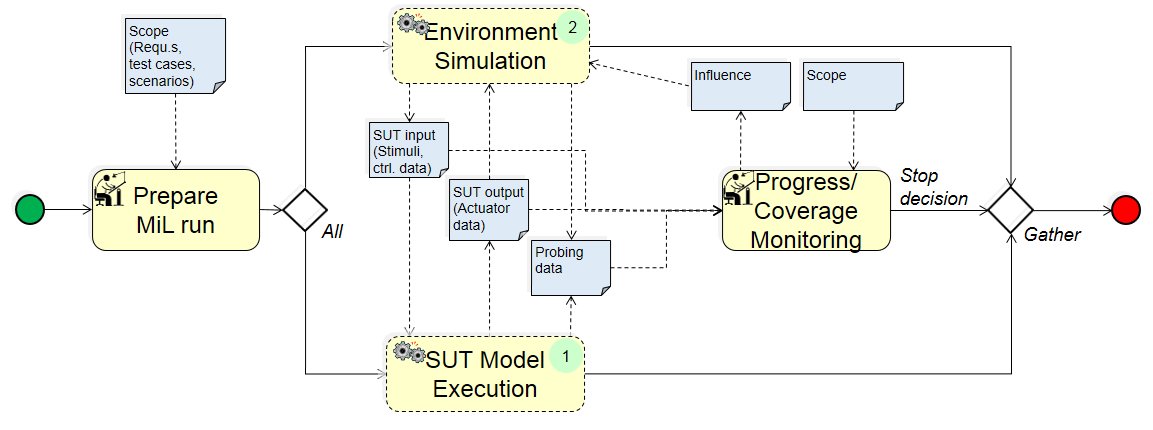

Structure

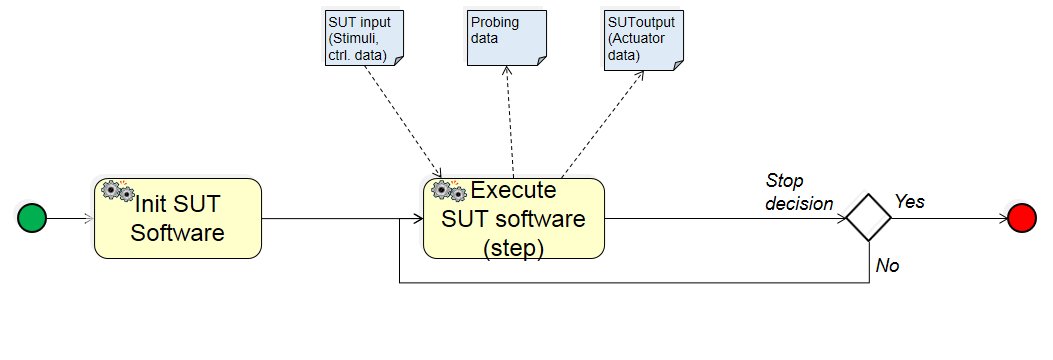

(1) SUT Model Execution:

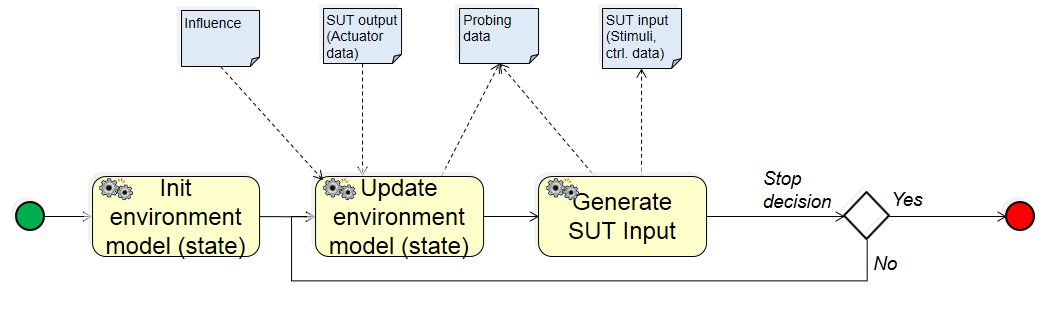

(2) Environment Simulation:

Participants and Important Artefacts

Participants:

SUT Model: executable formal description of the target SUT.

Environment Simulation: executable formal description of the real world that is able to compute the impact of the SUT’s behavior on its environment and derive corresponding new stimuli for the SUT (model). If the SUT is part of a larger system, the environment may include the remaining parts of that system.

Engineer (expert): In charge of initializing the models of SUT and environment, monitoring progress, and terminating the MiL process.

Artefacts:

Scope: set of requirements, test cases, and/or scenarios that shall be addressed during the MiL process.

SUT input:

(a) stimuli representing environmental aspects measured by sensors, e.g. temperature, or “raw” data such as camera images or radar measurements.

(b) control data provided to the SUT, e.g. by other components of the final system.

SUT output (Actuator data): data output by the SUT model, mainly explicit commands to control actuators, e.g. current values for electric motors, but possibly also implicit outputs, e.g. pollutant emissions.

Probing data: implicitly generated data characterizing model states, for example SUT speed or positions of obstacles. Can include parameters that allow effective monitoring of progress and of requirements adherence, e.g. KPIs (Key Performance Indicators).

Influence: Means of influencing the evolution of the environment in order to cover the scope, for instance a request to increase the number of obstacles or to change lighting conditions.
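The artefacts exchanged during a MiL run can be illustrated as plain data types. The following is only a minimal sketch; all class and field names (`Scope`, `SutInput`, `SutOutput`, `ProbingData`, `Influence` and their fields) are hypothetical and simply mirror the examples given above.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical data types mirroring the MiL artefacts; names are illustrative only.

@dataclass
class Scope:
    # Requirements, test cases and/or scenarios to be addressed in the MiL process.
    items: List[str] = field(default_factory=list)

@dataclass
class SutInput:
    stimuli: Dict[str, float]       # (a) sensor-like stimuli, e.g. {"temperature": 21.5}
    control_data: Dict[str, float]  # (b) data from other components of the final system

@dataclass
class SutOutput:
    actuator_data: Dict[str, float]  # explicit outputs, e.g. {"motor_current_a": 1.2}
    implicit: Dict[str, float] = field(default_factory=dict)  # e.g. pollutant emissions

@dataclass
class ProbingData:
    values: Dict[str, float]  # model-state characteristics, e.g. speed or KPIs

@dataclass
class Influence:
    # Requested change to the environment's evolution, e.g. {"obstacle_count": 2.0}.
    changes: Dict[str, float]

example_input = SutInput(stimuli={"temperature": 21.5}, control_data={"mode": 1.0})
print(example_input)
```

Plain data classes keep the artefacts independent of any particular simulation tool; a real co-simulation setup would typically map them onto the framework's own signal types.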

Actions/Collaborations

MiL run preparation: Selecting the initial configuration of the models such that the entire given MiL scope can be covered.
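One possible way to support run preparation, assuming it is known in advance which scope items each candidate initial configuration covers, is a greedy set-cover heuristic. This is a sketch under that assumption only; the function name, configuration names and requirement IDs are hypothetical, and the greedy approach does not guarantee a minimal selection.

```python
from typing import Dict, List, Set, Tuple

def select_configurations(scope: Set[str],
                          candidates: Dict[str, Set[str]]) -> Tuple[List[str], Set[str]]:
    """Greedily pick initial model configurations until the scope is covered.

    Returns the chosen configuration names and the scope items that no
    candidate covers (these would have to be handled elsewhere, e.g. in SiL).
    """
    uncovered = set(scope)
    chosen: List[str] = []
    while uncovered:
        # Pick the configuration covering the most still-uncovered items.
        best = max(candidates, key=lambda name: len(candidates[name] & uncovered), default=None)
        if best is None or not candidates[best] & uncovered:
            break  # remaining items cannot be covered by any candidate
        chosen.append(best)
        uncovered -= candidates[best]
    return chosen, uncovered

# Hypothetical scope items and candidate configurations.
scope = {"REQ-1", "REQ-2", "REQ-3", "REQ-4"}
candidates = {
    "night_few_obstacles": {"REQ-1", "REQ-2"},
    "day_many_obstacles": {"REQ-2", "REQ-3"},
    "rain": {"REQ-3"},
}
chosen, missing = select_configurations(scope, candidates)
print(chosen, missing)  # ['night_few_obstacles', 'day_many_obstacles'] {'REQ-4'}
```

Reporting the uncovered remainder explicitly makes it visible early that parts of the scope cannot be addressed in MiL at all.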

SUT Model Execution:

– Init SUT model (state): bringing the model into the initial state.

– Update SUT model (state): whenever it receives stimuli, it computes the reaction of the SUT and calculates the resulting state and output.

– Generate SUT Output: computing control parameters for actuators based on the resulting state and output, as well as other outputs (e.g. to other components in the final system).
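The three SUT model execution steps can be sketched for a toy SUT. The example below assumes a hypothetical proportional speed controller (`CruiseControllerModel`, with made-up gain and current limit); it only illustrates the init / update / generate-output split and is not a real controller design.

```python
from dataclasses import dataclass

@dataclass
class CruiseControllerModel:
    """Hypothetical SUT model: a proportional speed controller (illustration only)."""
    gain: float = 0.5
    max_current_a: float = 10.0
    state_error: float = 0.0  # last speed error, the model's internal state

    def init(self) -> None:
        # Init SUT model (state): bring the model into its initial state.
        self.state_error = 0.0

    def update(self, measured_speed: float, target_speed: float) -> None:
        # Update SUT model (state): react to a stimulus (measured speed) and
        # control data (target speed) by computing the new internal state.
        self.state_error = target_speed - measured_speed

    def generate_output(self) -> dict:
        # Generate SUT output: derive an actuator command from the resulting
        # state, clamped to the actuator's admissible range.
        raw = self.gain * self.state_error
        current = max(-self.max_current_a, min(self.max_current_a, raw))
        return {"motor_current_a": current}

sut = CruiseControllerModel()
sut.init()
sut.update(measured_speed=20.0, target_speed=25.0)
print(sut.generate_output())  # {'motor_current_a': 2.5}
```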

Environment Simulation:

– Init Environment model (state): bringing the model into the initial state.

– Update Environment model (state): whenever it receives the SUT’s output, it computes the impact of the SUT’s behavior on the environment and calculates the new environment state. Whenever it receives influence, it moves its internal state in the required direction.

– Generate SUT Input: computes and outputs updated stimuli based on the resulting environmental state, as well as further SUT control data.
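The environment simulation steps can be sketched in the same way. This minimal example assumes a hypothetical one-dimensional vehicle (`VehicleEnvironment`) whose speed responds to a motor current; the dynamics coefficients, the `slope_decel` influence and the dictionary keys are all invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class VehicleEnvironment:
    """Hypothetical environment model: 1-D vehicle dynamics (illustration only)."""
    dt_s: float = 0.1           # simulated time per update step
    accel_per_amp: float = 0.8  # m/s^2 per ampere of motor current (assumed)
    drag: float = 0.05          # linear drag coefficient (assumed)
    speed: float = 0.0
    slope_decel: float = 0.0    # deceleration caused by road slope, set via influence
    target_speed: float = 25.0  # control data forwarded to the SUT

    def init(self, speed: float = 0.0) -> None:
        # Init environment model (state).
        self.speed = speed
        self.slope_decel = 0.0

    def update(self, sut_output: dict) -> None:
        # Update environment model (state): impact of the SUT's output on the vehicle.
        accel = (self.accel_per_amp * sut_output["motor_current_a"]
                 - self.drag * self.speed - self.slope_decel)
        self.speed = max(0.0, self.speed + accel * self.dt_s)

    def apply_influence(self, influence: dict) -> None:
        # Move the internal state in the requested direction, e.g. a steeper road.
        self.slope_decel += influence.get("slope_decel", 0.0)

    def generate_sut_input(self) -> dict:
        # Generate SUT input: stimuli from the new state plus SUT control data.
        return {"stimuli": {"speed": self.speed},
                "control_data": {"target_speed": self.target_speed}}

env = VehicleEnvironment()
env.init(speed=20.0)
env.update({"motor_current_a": 2.5})
print(env.generate_sut_input())
```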

Progress/Coverage Monitoring: Assessing whether the MiL run stays within scope, e.g. whether requirements are violated, and whether coverage is increasing. If coverage progress is unsatisfactory, influence can be sent to the environment model.
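Progress and coverage monitoring can be sketched as a small online monitor. The sketch assumes that requirements and coverage goals can be expressed as predicates over one cycle of probing data, and that a fixed, hypothetical influence is sent once coverage has not increased for a configurable number of cycles (`patience`); real monitors are usually far richer.

```python
from typing import Callable, Dict, List, Optional, Set

Probing = Dict[str, float]

class ProgressMonitor:
    """Hypothetical online monitor for a MiL run (illustration only)."""

    def __init__(self,
                 requirements: Dict[str, Callable[[Probing], bool]],
                 coverage_goals: Dict[str, Callable[[Probing], bool]],
                 stall_influence: Dict[str, float],
                 patience: int = 3) -> None:
        self.requirements = requirements        # name -> predicate that must hold
        self.coverage_goals = coverage_goals    # scope item -> "is it reached now?"
        self.stall_influence = stall_influence  # influence sent when coverage stalls
        self.patience = patience                # cycles without new coverage tolerated
        self.covered: Set[str] = set()
        self.violations: List[str] = []
        self._stalled = 0

    def observe(self, probing: Probing) -> Optional[Dict[str, float]]:
        """Assess one cycle of probing data; return an influence request or None."""
        # Stay within scope: record every requirement violated in this cycle.
        self.violations += [n for n, holds in self.requirements.items() if not holds(probing)]
        # Coverage: which scope items are reached for the first time?
        newly = {item for item, reached in self.coverage_goals.items()
                 if item not in self.covered and reached(probing)}
        self.covered |= newly
        self._stalled = 0 if newly else self._stalled + 1
        if self._stalled >= self.patience:
            self._stalled = 0
            return dict(self.stall_influence)  # coverage not increasing: influence env
        return None

monitor = ProgressMonitor(
    requirements={"speed_limit": lambda p: p["speed"] <= 30.0},
    coverage_goals={"high_speed": lambda p: p["speed"] > 24.0},
    stall_influence={"target_speed_delta": 5.0},
    patience=2,
)
results = [monitor.observe({"speed": s}) for s in (10.0, 12.0, 31.0)]
print(results, monitor.covered, monitor.violations)
```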

Discussion

Benefits:

– Essential properties of the target SUT can be evaluated without the need to produce any physical item and without endangering the environment.

– Support for quality surveillance during system construction.

Limitations: Developing the environment model can be very expensive. Moreover, unlike the SUT model, from which the target SUT can sometimes be derived, it cannot be reused, except for SiL and potentially for other SUTs.

Comments:

Alternating execution: in the outlined workflow, the SUT model and the environment model are executed alternately. Execution cycles with more overlap are also possible.
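The strictly alternating scheme can be sketched as a simple loop in which the SUT model and the environment model take turns. The function `run_alternating` and the toy heater/room models are hypothetical; overlapping execution, where both models run concurrently on the other's previous output, is not shown.

```python
from typing import Callable, Dict, List, Tuple

Signals = Dict[str, float]

def run_alternating(sut_step: Callable[[Signals], Signals],
                    env_step: Callable[[Signals], Signals],
                    initial_input: Signals,
                    cycles: int) -> List[Tuple[Signals, Signals]]:
    """Execute SUT model and environment model strictly one after the other.

    In each cycle the SUT model consumes the current stimuli and produces
    output; only then does the environment model consume that output and
    produce the next stimuli. Returns the (input, output) pair of every cycle.
    """
    trace = []
    sut_input = initial_input
    for _ in range(cycles):
        sut_output = sut_step(sut_input)  # SUT model execution
        trace.append((sut_input, sut_output))
        sut_input = env_step(sut_output)  # environment simulation
    return trace

# Hypothetical toy models: a heater controller and a room that warms by
# 1 degree per unit of heating power and loses 0.5 degrees per step.
def heater_controller(inp: Signals) -> Signals:
    return {"power": 2.0 if inp["temperature"] < 20.0 else 0.0}

class Room:
    def __init__(self, temperature: float) -> None:
        self.temperature = temperature

    def __call__(self, out: Signals) -> Signals:
        self.temperature += out["power"] - 0.5
        return {"temperature": self.temperature}

trace = run_alternating(heater_controller, Room(18.0), {"temperature": 18.0}, cycles=4)
for inp, out in trace:
    print(inp["temperature"], out["power"])
```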

(Im)perfect Stimuli: depending on the purpose of the overall XiL process, simulated sensor inputs can be perfect or defective. The latter are used if the robustness of sensor data processing is to be tested.
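Perfect and defective stimuli can be contrasted with a small sensor model. The defect types chosen here (Gaussian noise, constant bias, random dropouts) and all parameter values are assumptions for illustration; which defects matter depends on the sensors of the actual SUT.

```python
import random
from typing import Optional

def perfect_sensor(true_value: float) -> float:
    # Perfect stimulus: the SUT model sees the exact simulated value.
    return true_value

def defective_sensor(true_value: float, rng: random.Random,
                     noise_std: float = 0.2, dropout_prob: float = 0.05,
                     bias: float = 0.0) -> Optional[float]:
    """Defective stimulus: Gaussian noise, constant bias and occasional dropouts.

    Returns None to model a missing measurement (dropout).
    """
    if rng.random() < dropout_prob:
        return None
    return true_value + bias + rng.gauss(0.0, noise_std)

rng = random.Random(42)  # fixed seed so that the MiL run is reproducible
samples = [defective_sensor(20.0, rng) for _ in range(5)]
print(samples)
```

Seeding the random generator keeps defective-stimulus runs repeatable, which helps when a robustness failure has to be analyzed afterwards.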

(Co-)Simulation Framework (not shown for simplicity): Often, the models are linked to a co-simulation framework which takes care of data transfer (stimuli etc.) and of transforming the data into the required format.

Timing control: MiL does not run in real time. How time is brought into the simulation depends on the application. It can be assumed that each processing step takes a fixed amount of time, or each model can output (as probing data) the time its physical counterpart would have needed. (Co-)Simulation frameworks usually take care of this.
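Both timing options can be sketched with a virtual clock that is decoupled from wall-clock time. The `VirtualClock` class and the step durations are hypothetical; a co-simulation framework would normally provide this functionality.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class VirtualClock:
    """Simulated time for a MiL run, independent of wall-clock time (illustration only)."""
    now_s: float = 0.0
    log: List[str] = field(default_factory=list)

    def advance(self, step_name: str, fixed_step_s: float,
                reported_duration_s: Optional[float] = None) -> float:
        # Either assume a fixed duration per processing step, or use the duration
        # the model reports (as probing data) for its physical counterpart.
        duration = reported_duration_s if reported_duration_s is not None else fixed_step_s
        self.now_s += duration
        self.log.append(f"{step_name} finished at t={self.now_s:.3f} s")
        return self.now_s

clock = VirtualClock()
clock.advance("sut", fixed_step_s=0.010)
clock.advance("environment", fixed_step_s=0.010, reported_duration_s=0.025)
print(clock.log)
```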

Monitoring vs. logging: Instead of online monitoring as shown in the diagram, probing data can be logged and analyzed after the MiL run.
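The logging alternative can be sketched with Python's standard `csv` module: during the run, probing data is only recorded; the assessment happens afterwards. The column names, the in-memory buffer standing in for a log file, and the speed-limit requirement are hypothetical.

```python
import csv
import io
from typing import Dict, List

def log_probing(writer: csv.DictWriter, cycle: int, probing: Dict[str, float]) -> None:
    # During the run: only record the probing data of each cycle, no assessment.
    writer.writerow({"cycle": cycle, **probing})

def analyze_log(text: str, speed_limit: float) -> List[int]:
    # After the run: find all cycles violating a (hypothetical) speed requirement.
    rows = csv.DictReader(io.StringIO(text))
    return [int(row["cycle"]) for row in rows if float(row["speed"]) > speed_limit]

buffer = io.StringIO()  # stands in for a log file
writer = csv.DictWriter(buffer, fieldnames=["cycle", "speed", "obstacle_distance"])
writer.writeheader()
for cycle, (speed, distance) in enumerate([(20.0, 50.0), (31.5, 12.0), (28.0, 8.0)]):
    log_probing(writer, cycle, {"speed": speed, "obstacle_distance": distance})

print(analyze_log(buffer.getvalue(), speed_limit=30.0))  # [1]
```

Offline analysis allows new checks to be applied to old runs without re-executing the models, at the cost of not being able to react (e.g. by influence) during the run.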

MiL (run) termination: A MiL run can be terminated in different ways: (a) after a certain number of cycles, (b) when some rule or requirement is violated, (c) when it turns out that the whole scope cannot be covered, or (d) when the whole scope has been successfully covered.

With (c) and (d), the whole process proceeds to SiL (propagating any uncovered aspects in case (c)); with (b), the whole process is stopped; and with (a), the next MiL run is prepared if the intended scope is not yet completely covered.
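The decision logic after termination can be sketched as follows. The enum and the returned strings are hypothetical; in particular, proceeding to SiL after case (a) when nothing is left uncovered is an assumption of this sketch, not something stated by the pattern.

```python
from enum import Enum
from typing import Set

class Termination(Enum):
    MAX_CYCLES = "a"     # (a) a certain number of cycles has been reached
    VIOLATION = "b"      # (b) a rule or requirement was violated
    NOT_COVERABLE = "c"  # (c) the whole scope cannot be covered
    SCOPE_COVERED = "d"  # (d) the whole scope has been covered

def next_step(reason: Termination, scope: Set[str], covered: Set[str]) -> str:
    """Decide how the XiL process continues after a MiL run (illustration only)."""
    if reason is Termination.VIOLATION:
        return "stop process"
    if reason is Termination.SCOPE_COVERED:
        return "proceed to SiL"
    if reason is Termination.NOT_COVERABLE:
        # Propagate the uncovered aspects to SiL.
        return "proceed to SiL with uncovered: " + ", ".join(sorted(scope - covered))
    # (a): prepare another MiL run while scope items remain uncovered.
    # Assumption: with nothing left uncovered, continue to SiL.
    return "prepare next MiL run" if scope - covered else "proceed to SiL"

print(next_step(Termination.NOT_COVERABLE, {"R1", "R2", "R3"}, {"R2"}))
```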

Application Example

ENABLE-S3 UC8 “Reconfigurable Video Processors for Space”:

– MiL navigation data generation providing input for the SUT


Relations to other Patterns

| Pattern Name | Relation* |
| --- | --- |
| Closed-Loop Testing | Description of the overall XiL process. That pattern is a super-pattern of this pattern. |
| SiL (Software in the Loop) | Next pattern in the XiL process |
| Test Plan Specification | Supports allocation of requirements to the MiL level |
| Scenario-based V&V Process | Supports allocation of test scenarios / cases to the MiL level |

* “this pattern” denotes the pattern described here, “that pattern” denotes the related pattern