Video-Based Runtime Validation of Localization

Meta-Information

Origin: Gustavo Garcia Padilla / Hella, Ralph Hänsel / Hella, Sadri Hakki / Hella

Written: April 2019

Purpose: Runtime validation of the localization component on a test track using a camera-based visual method. The pattern consists of a training part and an evaluation part. In the training part, a high quality map containing internal image features of the test track is generated during a training drive and then registered with an externally generated map of the test track that includes visually recognizable landmarks. The runtime validation of the localization component is performed in the evaluation part: the position results of the system under test (SUT) are compared with the position results of the reference system and evaluated using suitable KPIs. The reference system localizes the vehicle based on the embedded landmarks while the vehicle performs the test drive. During the test drive, the ego position given by the SUT, the reference position given by the reference system and, optionally, a target position given by external infrastructure such as a PAM (Park Area Management) system are recorded for the KPI calculation.
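Comparing the SUT output with the reference output requires both position streams on a common time base, because the two systems generally report at different rates. The pattern does not prescribe how this is done. The following is a minimal sketch, assuming each stream is a time-sorted list of `(t, x, y)` tuples in a shared clock and map frame, that linearly interpolates the reference position at each SUT timestamp. The names `interpolate_reference` and `pair_with_reference` are hypothetical.

```python
from bisect import bisect_left
from typing import List, Optional, Tuple

# Hypothetical record layout: (t [s], x [m], y [m]) in a shared clock and map frame.
Sample = Tuple[float, float, float]
Point = Tuple[float, float]


def interpolate_reference(reference: List[Sample], t: float) -> Optional[Point]:
    """Linearly interpolate the reference position at time t.

    Returns None if t lies outside the time span covered by the reference stream.
    """
    if not reference or t < reference[0][0] or t > reference[-1][0]:
        return None
    times = [s[0] for s in reference]
    i = bisect_left(times, t)  # first index with times[i] >= t
    if times[i] == t:
        return reference[i][1], reference[i][2]
    t0, x0, y0 = reference[i - 1]
    t1, x1, y1 = reference[i]
    w = (t - t0) / (t1 - t0)  # interpolation weight in (0, 1)
    return x0 + w * (x1 - x0), y0 + w * (y1 - y0)


def pair_with_reference(sut: List[Sample], reference: List[Sample]) -> List[Tuple[float, Point, Point]]:
    """Pair every SUT sample with the interpolated reference position at the same time."""
    pairs = []
    for t, x, y in sut:
        ref = interpolate_reference(reference, t)
        if ref is not None:  # drop SUT samples outside the reference time span
            pairs.append((t, (x, y), ref))
    return pairs
```

Rebuilding the timestamp list on every call keeps the sketch short; for long recordings it would be computed once.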

Context/Pre-Conditions: This pattern can be used on a test track. An external machine-readable 3D map of the test track including visually recognizable landmarks is required.

To consider: The test track can be mapped by an external measuring device, which should provide a high-accuracy 3D structure of the test track. For registration purposes, some visually recognizable landmarks should be annotated in the measured map. Additional visual landmarks may be added to the test track and mapped by the measuring device.
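Registering the internal map to the external map through the annotated landmarks amounts to estimating a rigid transform from landmark correspondences. The sketch below is one possible approach, not necessarily the method used in the original work: it assumes a planar (2D) simplification of the 3D maps, known correspondences between landmarks in both maps, and a closed-form least-squares (Kabsch-style) fit of rotation and translation. The function names are hypothetical; a full 3D registration follows the same idea with a 3×3 rotation obtained from an SVD.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def estimate_rigid_2d(src: List[Point], dst: List[Point]) -> Tuple[float, float, float]:
    """Least-squares rotation and translation mapping src landmarks onto dst landmarks.

    src[i] and dst[i] must be the same physical landmark (e.g. internal vs. external map).
    Returns (theta, tx, ty) such that dst ~= R(theta) * src + (tx, ty).
    """
    if len(src) != len(dst) or len(src) < 2:
        raise ValueError("need at least two corresponding landmark pairs")
    n = len(src)
    sx, sy = sum(p[0] for p in src) / n, sum(p[1] for p in src) / n
    dx, dy = sum(q[0] for q in dst) / n, sum(q[1] for q in dst) / n
    s_cos = s_sin = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        # Work with centroid-free coordinates so only the rotation remains.
        px, py, qx, qy = px - sx, py - sy, qx - dx, qy - dy
        s_cos += px * qx + py * qy
        s_sin += px * qy - py * qx
    theta = math.atan2(s_sin, s_cos)  # angle maximizing the alignment of both point sets
    c, s = math.cos(theta), math.sin(theta)
    tx = dx - (c * sx - s * sy)
    ty = dy - (s * sx + c * sy)
    return theta, tx, ty


def apply_rigid_2d(theta: float, tx: float, ty: float, p: Point) -> Point:
    """Transform a point from the source (internal) frame into the target (external) frame."""
    c, s = math.cos(theta), math.sin(theta)
    return c * p[0] - s * p[1] + tx, s * p[0] + c * p[1] + ty
```

With the transform estimated, every feature of the internal map can be expressed in the external map frame via `apply_rigid_2d`.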

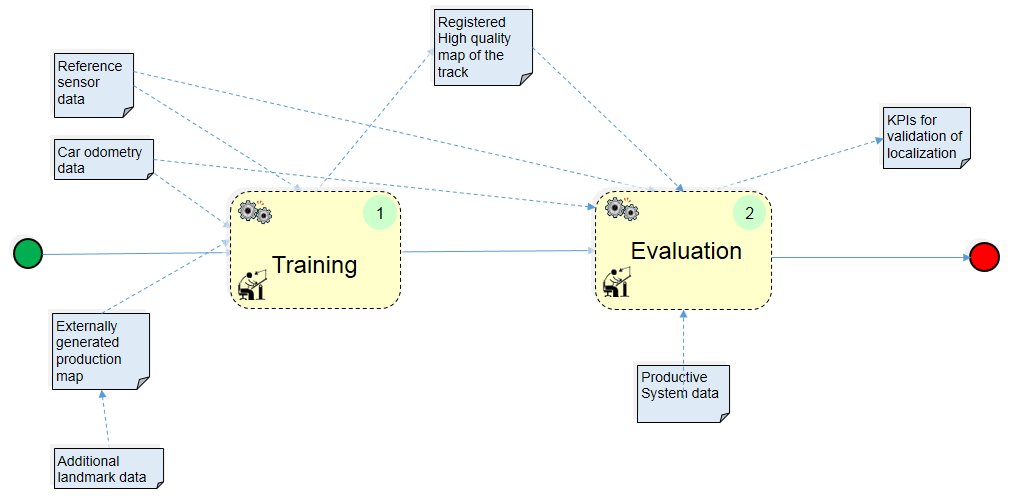

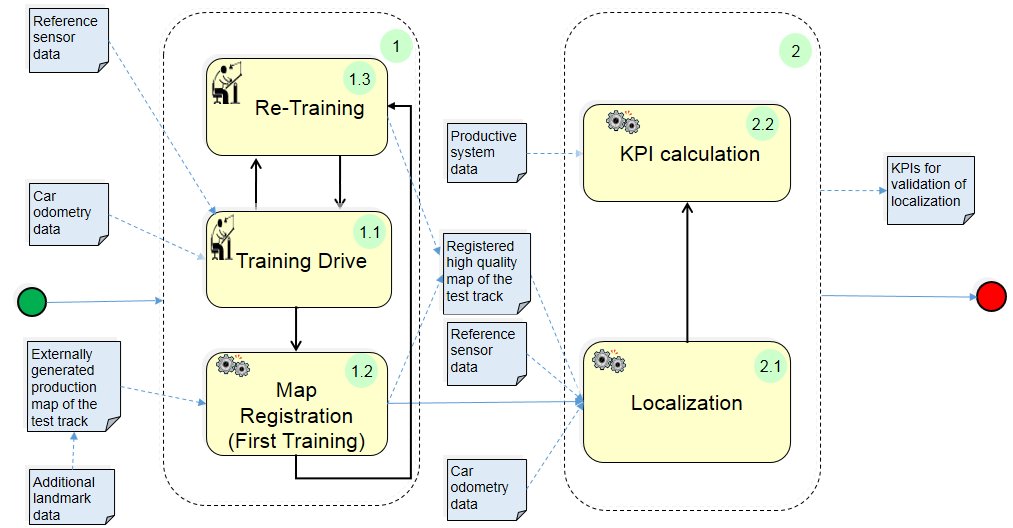

Structure

[Figure: structure of the pattern, with a Training Mode part and an Evaluation part]

Participants and Important Artefacts

Reference sensor data: Camera video data, including calibration data, as input to the training and evaluation processes.

Car odometry data: Velocity and yaw angle as input to the training and evaluation processes.

High quality map of the track: Internally generated map with internal image features as output of the training drive.

Externally generated production map: 3D machine-readable map of the test track with visually recognizable landmarks.

Additional landmark data: E.g. 2D matrix codes, with their positions included in the externally generated production map, used if not enough visually recognizable landmarks are available.

Registered high quality map of the track: Output of the registration process between internal and external maps.

Productive system data: Output of the localization system to be validated.

KPIs for validation of localization: Results of the KPI calculation and output of the pattern.
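Car odometry (velocity and yaw angle) is listed as an input to both processes, but the pattern does not state how it is used. One common use, shown here only as an assumption, is to propagate the vehicle position between camera-based landmark fixes. The sketch integrates hypothetical `(dt, speed, yaw)` samples with a simple explicit Euler step; the name `dead_reckon` is hypothetical.

```python
import math
from typing import List, Tuple

# Hypothetical odometry sample: (dt [s], speed [m/s], yaw angle [rad] in the map frame).
OdoSample = Tuple[float, float, float]


def dead_reckon(x0: float, y0: float, samples: List[OdoSample]) -> List[Tuple[float, float]]:
    """Integrate speed and yaw angle into 2D positions (explicit Euler)."""
    x, y = x0, y0
    path = [(x, y)]
    for dt, speed, yaw in samples:
        # Distance travelled in this step, projected onto the map axes.
        x += speed * dt * math.cos(yaw)
        y += speed * dt * math.sin(yaw)
        path.append((x, y))
    return path
```

Because integration errors accumulate, such an estimate drifts with distance travelled, which is why it needs to be anchored by the landmark-based fixes.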

Actions/Collaborations

(1) Training:

(1.1) Training drive: The internal high quality map with internal image features of the test track is generated.

(1.2) Map registration: The internal high quality map is registered to the production map.

(1.3) Re-Training: Additional training to increase robustness of the high quality internal map.

(2) Evaluation:

(2.1) Localization: Localization of the car with respect to the registered high quality map.

(2.2) KPI calculation: Calculation of error metrics between the localization data of the production system and the reference system.
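The concrete KPIs are not fixed by this pattern (the KPI Catalogue Definition pattern helps to identify them). As an illustration only, the sketch below computes common position-error statistics (mean, RMSE, maximum and a nearest-rank percentile of the Euclidean error) from time-aligned SUT and reference positions. The KPI selection and the function name `position_error_kpis` are assumptions.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def position_error_kpis(sut: List[Point], ref: List[Point], percentile: float = 95.0) -> Dict[str, float]:
    """Euclidean position-error statistics between time-aligned SUT and reference positions."""
    if len(sut) != len(ref) or not sut:
        raise ValueError("need equally long, non-empty, time-aligned position lists")
    errors = sorted(math.dist(p, q) for p, q in zip(sut, ref))
    n = len(errors)
    rank = max(1, math.ceil(percentile / 100.0 * n))  # nearest-rank percentile
    return {
        "mean": sum(errors) / n,
        "rmse": math.sqrt(sum(e * e for e in errors) / n),
        "max": errors[-1],
        f"p{percentile:g}": errors[rank - 1],
    }
```

For example, errors of 0, 1, 0 and 3 m give a mean of 1.0 m, a maximum of 3.0 m and a 95th percentile of 3.0 m.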

Discussion

Benefits: The pattern is particularly useful for testing localization systems that have to work in indoor environments where GPS is not available.

Limitations:

– The accuracy of the method depends on the physical characteristics of the camera used, such as resolution and field of view.

– For the test of localization systems that also need a training phase (e.g. some lidar-based or similar systems), the following remark applies: “Using a track for validation that was trained before gives only limited indication about performance of the SUT on untrained tracks”.

– For the test of other localization systems that do not need a training phase (e.g. odometry-based or GPS-based systems), the test results on one test track can be transferred to other, untrained test tracks.
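To make the dependence on resolution and field of view concrete, the sketch below uses an ideal pinhole camera model (an assumption: lens distortion, which matters for wide-angle and fisheye optics, is ignored). The focal length in pixels follows from image width and horizontal field of view, and one pixel near the image centre then covers roughly `distance / focal_length` metres laterally. Function names are hypothetical.

```python
import math


def focal_length_px(image_width_px: int, hfov_deg: float) -> float:
    """Pinhole focal length in pixels from image width and horizontal field of view."""
    if not 0.0 < hfov_deg < 180.0:
        raise ValueError("horizontal field of view must be in (0, 180) degrees")
    return (image_width_px / 2.0) / math.tan(math.radians(hfov_deg) / 2.0)


def pixel_footprint_m(distance_m: float, image_width_px: int, hfov_deg: float) -> float:
    """Approximate lateral extent (m) covered by one pixel near the image centre."""
    return distance_m / focal_length_px(image_width_px, hfov_deg)
```

For a 1920 px wide image with a 90° horizontal field of view, the focal length is 960 px, so at 9.6 m one pixel spans about 1 cm; a wider field of view or a lower resolution increases this footprint.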

Application hints: If the test track lacks enough visually recognizable landmarks, additional landmarks such as 2D matrix codes can be used.
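Deciding where additional landmarks are needed can be supported by a simple coverage check along the planned route. The sketch below is a hypothetical helper, not part of the original pattern: it counts the mapped landmarks within an assumed maximum range and within the horizontal field of view of a forward-facing camera, and flags route poses that see fewer than an assumed minimum. It ignores occlusion, landmark size and detection reliability; the parameter defaults are illustrative only.

```python
import math
from typing import List, Tuple

Pose = Tuple[float, float, float]  # x [m], y [m], heading [rad]
Point = Tuple[float, float]


def visible_landmarks(pose: Pose, landmarks: List[Point], max_range_m: float, hfov_deg: float) -> int:
    """Count landmarks within range and inside the horizontal field of view of the camera."""
    x, y, heading = pose
    half_fov = math.radians(hfov_deg) / 2.0
    count = 0
    for lx, ly in landmarks:
        dx, dy = lx - x, ly - y
        if math.hypot(dx, dy) > max_range_m:
            continue
        bearing = math.atan2(dy, dx) - heading
        bearing = math.atan2(math.sin(bearing), math.cos(bearing))  # wrap to [-pi, pi]
        if abs(bearing) <= half_fov:
            count += 1
    return count


def sparse_sections(route: List[Pose], landmarks: List[Point], max_range_m: float = 20.0,
                    hfov_deg: float = 90.0, min_landmarks: int = 3) -> List[int]:
    """Indices of route poses that see fewer than min_landmarks landmarks."""
    return [i for i, pose in enumerate(route)
            if visible_landmarks(pose, landmarks, max_range_m, hfov_deg) < min_landmarks]
```

Route sections returned by `sparse_sections` would be candidates for placing additional 2D matrix codes.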

Variants: The method can be adapted for use with different camera systems, such as stereo or surround-view cameras.
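For the stereo variant, the standard relation for a rectified stereo pair gives depth from disparity as Z = f·B/d (focal length f in pixels, baseline B, disparity d in pixels), and a first-order error estimate δZ ≈ Z²·δd/(f·B). The sketch below evaluates both; the function names and the default disparity error of 0.5 px are assumptions for illustration.

```python
def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point from its disparity in a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0.0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def stereo_depth_error_m(focal_px: float, baseline_m: float, depth_m: float,
                         disparity_error_px: float = 0.5) -> float:
    """First-order depth uncertainty: dZ ~= Z^2 * dd / (f * B)."""
    return depth_m ** 2 * disparity_error_px / (focal_px * baseline_m)
```

With f = 1000 px and B = 0.12 m, a disparity of 12 px corresponds to 10 m, with roughly 0.42 m uncertainty for a 0.5 px disparity error. Because the error grows quadratically with depth, nearby landmarks constrain the position considerably better than distant ones.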

Application Example

ENABLE-S3 Use Case 6 “Valet Parking”: The pattern was applied for the runtime validation of the localization component in the use case Valet Parking.

Relations to other Patterns

| Pattern Name | Relation* |
|---|---|
| Recorded Data Labeling | That pattern is used to label camera video data for ground truth generation |
| KPI Catalogue Definition | That pattern helps to identify relevant KPIs |

* “this pattern” denotes the pattern described here, “that pattern” denotes the related pattern