MBA-Based Runtime Behavior Verification

Meta-Information

Origin: Mehrdad Saadatmand, Raluca Marinescu, Cristina Seceleanu, Raluca Marinescu / all ALTEN Sweden, Wolfgang Herzner / AIT.

Also Known As: Architectural Consistency Checking

Written: September 2014

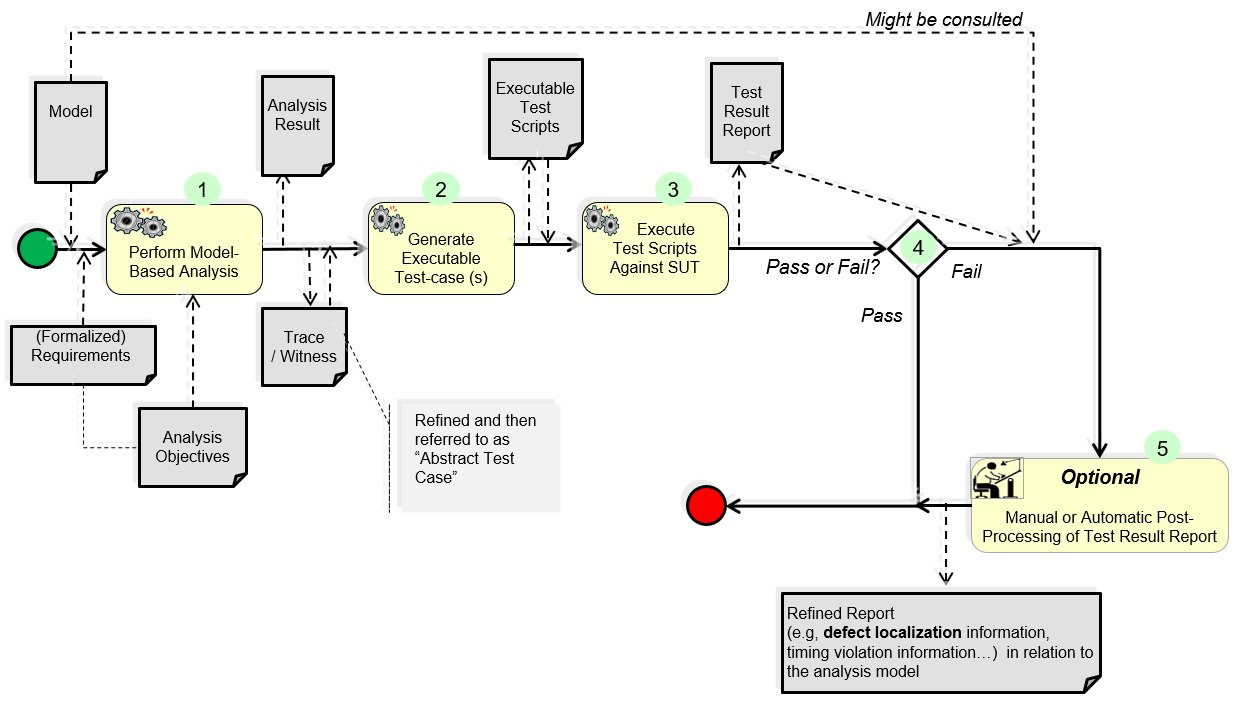

Purpose: to ensure that the runtime behaviour of the system matches the models that are used as the input for static model-based analysis. In performing model-based analysis, some assumptions are taken into account (e.g., WCET,…). By testing a system with respect to these assumptions, it is verified whether they are valid at runtime or not. This is of great importance due to the fact that if the assumptions do not hold, and there is any inconsistency between the models capturing the expected behaviour and the actual behaviour of the system at runtime, the result of model-based analysis may not be valid and applicable anymore for the implemented system.

Context/Pre-Conditions: It should be possible to retrieve a trace/witness from the model-based analysis tool to create an executable test case from.

To consider: the code (developed or automatically generated) needs to be testable with respect to the assumptions and properties that are to be tested. This could be achieved, e.g., by following certain rules in implementing the system. For instance, in the context of the use-case where we have applied this pattern, the code is implemented/instrumented in a way that we can track states and transitions at runtime.

Structure

Participants and Important Artefacts

Analysis objectives: properties that have to be possessed by the test model. They are derived from requirements by the T&A model engineer and may be formalized, e.g. as TCTL properties.

.

Actions / Collaborations

(Some more information can be found under “Application Example“ from this perspective.)

(1) Using the test objectives, the system model is analysed, resulting in traces through the model that represent witness for fulfilment the requirements. These traces represent test cases, but in abstract notation, because they cannot be directly applied to the SUT.

(2) The test engineer turns these abstract test cases (automatically) into executable test cases, typically in form of test scripts, which can be understood by the test environment. This is mostly achieved by a rather straightforward notation transformation.

(3) The test engineer executes the test cases against the SUT, and records the test outcomes in the test reports.

(4) The test engineer evaluates whether tests are passed of failed, typically by comparing reports with the intended results (test oracles).

(5) For failed tests it can be appropriate to post-process the test report manually by the test engineer, in order to provide additional information such as suspected fault location, which were gathered during test result evaluation.

.

Discussion

Implications: The pattern helps to check if the models and systems behaviour at runtime match or not. As a consequence:

– It would also be possible to get information about the vicinity of a problem and trace it back to related parts and elements in the model.

– More test cases can then be generated by TCG tools to target that part of the system in which a deviation from the expected behaviour is observed. In this case, collaboration with TCG tools can also be considered (not shown in the figure).

Comments:

– This pattern seems to resemble “MBT with Test Model Analysis”. However, it uses model analysis for gaining test cases which assure conformance of SUT with test model rather than increasing trust into the test model itself. It further emphasizes difference between abstract test cases and concrete test cases (executable test cases) – in difference to “MBT”.

– For examples, how appropriate abstract test cases can be achieved, see the application example below.

.

Application Examples

MBAT Automotive Use Case 1 (Brake by Wire) / Scenario 5 (TCG based on Test Model)

.

Relations to other Patterns

| Pattern Name | Relation* |

| MBT | This pattern can be used for generating the tests which are applied in “MBT” |

| MBT with Test Model Analysis | That pattern also analyses the test model, but for assuring model correctness rather than for generating test cases |

| Get Confidence In Generated Code | Both patterns complement each other (that pattern can be applied regardless whether the code is fully generated or manually developed) |

| Preserve Requirements across Abstraction Levels | That pattern helps to preserve requirements across abstraction levels |

* “this pattern” denotes the pattern described here, “that pattern” denotes the related pattern